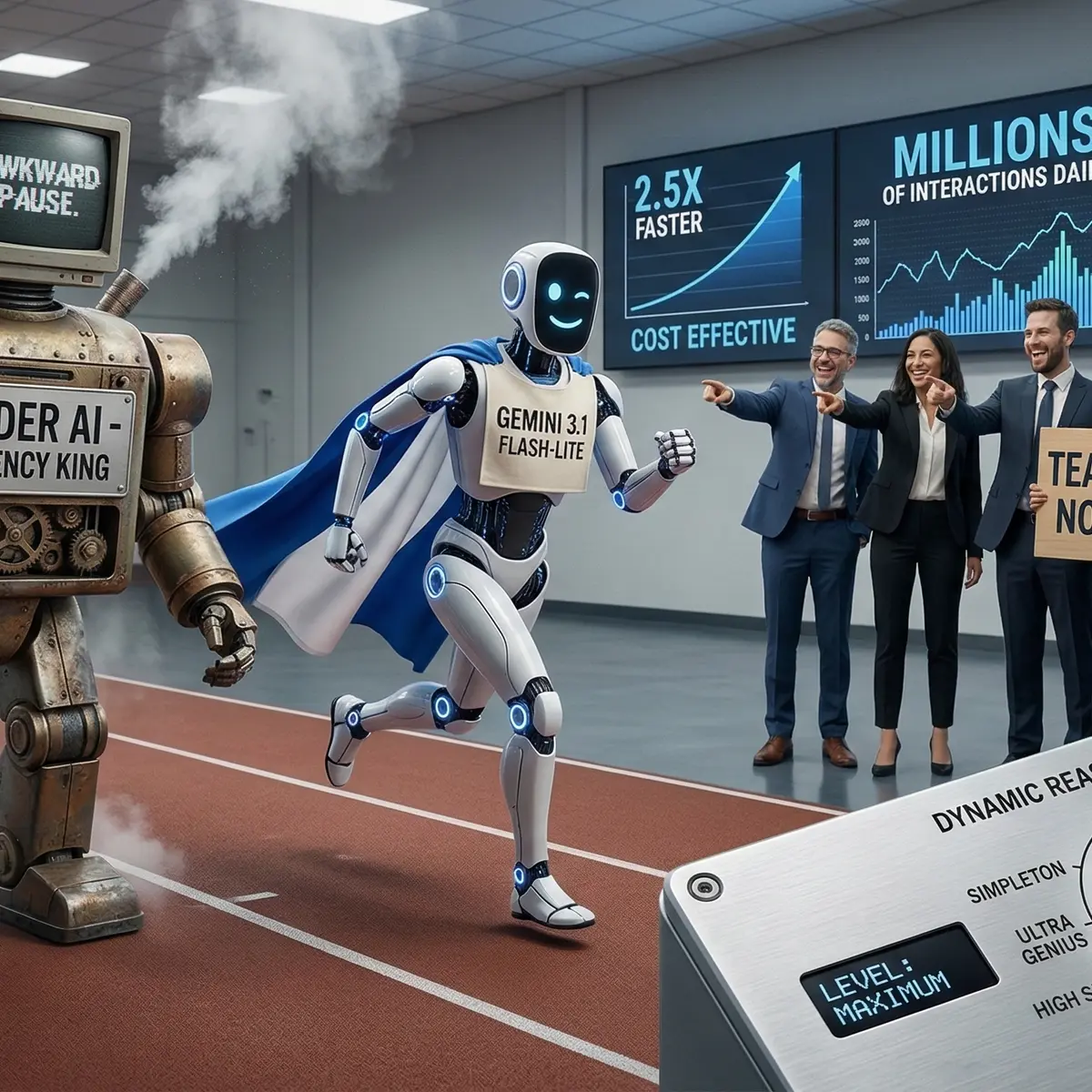

👀 Latency can make or break your user experience, and Google's new Gemini 3.1 Flash-Lite model tackles this head-on with a 2.5x faster 'time to first token', making customer support and real-time applications feel less like tools and more like teammates. The 'Lite' might imply a trade-off, but according to Google, Flash-Lite's performance is anything but lightweight: it is a cost-effective, high-speed model optimised for high-throughput AI tasks. With dynamic reasoning levels, we can dial the amount of reasoning up or down per task, saving cost on simple requests without sacrificing capability on harder ones. It's a smart move in the AI utility playbook, especially for enterprises managing millions of interactions daily. AI latency: the difference between a swift reply and an awkward pause.

#PromptEngineering #DeveloperProblems #BuildingWithAI